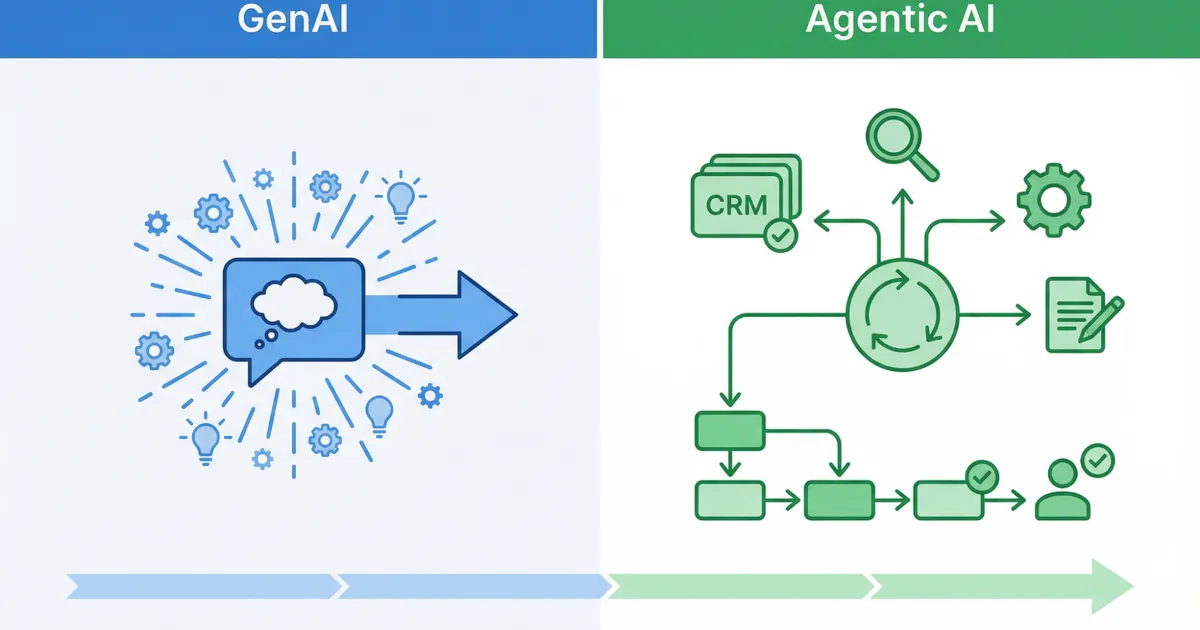

Generative AI has shown many teams how quickly text, ideas, and summaries can be produced. The next step is AI agents: systems that do not just generate content, but pursue goals and complete tasks across multiple steps using tools, data sources, and workflows.

For agents to create real value in day-to-day work instead of merely looking impressive, teams need a different setup than they needed for pure prompting. Here are the ten most important changes required when moving from GenAI hype to agent-based, controlled execution.

1. Shift the goal: from answers to outcomes

GenAI usually produces an output: a text, a list, or an idea. Agents work toward outcomes and improve iteratively. They plan, act, check, and correct.

Team shift: Stop asking only, “Can AI write this?” Start asking, “Can AI deliver the outcome, including the intermediate steps and a clean handoff?”
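
The plan, act, check, correct cycle can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a specific agent framework: `run_until_done`, `draft_reply`, `has_order_number`, the step limit, and the sample order number are all hypothetical names and values chosen for the example.

```python
from typing import Callable, Optional


def run_until_done(
    act: Callable[[Optional[str]], str],
    check: Callable[[str], Optional[str]],
    max_steps: int = 3,
) -> dict:
    """Repeat act -> check -> correct until the check passes or steps run out.

    act(feedback) produces a result; check(result) returns None when the
    result is acceptable, or a short description of what is still wrong.
    """
    feedback: Optional[str] = None
    result = ""
    for step in range(1, max_steps + 1):
        result = act(feedback)    # act, using feedback from the previous check
        feedback = check(result)  # check the outcome
        if feedback is None:
            return {"status": "done", "result": result, "steps": step}
    # Step budget used up: hand over to a human instead of looping forever.
    return {
        "status": "handoff",
        "result": result,
        "steps": max_steps,
        "open_issue": feedback,
    }


# Toy example: the first draft forgets the order number (hypothetical data).
def draft_reply(feedback: Optional[str]) -> str:
    reply = "Your delivery is on its way."
    if feedback is not None:
        reply += " Order number: 1042."
    return reply


def has_order_number(reply: str) -> Optional[str]:
    return None if "Order number" in reply else "missing order number"


outcome = run_until_done(draft_reply, has_order_number)
```

In this toy run the first draft fails the check, the second passes, and the loop stops. If the check never passes, the function returns a handoff with the open issue attached rather than retrying indefinitely.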

2. Define agent jobs instead of AI playgrounds

Agents work best when they have a clearly defined job. Typical examples include:

- pre-qualifying inquiries

- providing status information from a knowledge base

- making documentation searchable

Team shift: Give each agent one job, one expected output, and one defined handoff point to humans or systems.

3. Set limits: autonomy is not a solo act

Agents should act, but not act wildly. In practice they need guardrails: approvals, limits, escalation paths, and clear no-go zones.

Team shift: Define exactly what the agent may do on its own, what requires approval, and when it must hand over to a human.
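
One way to make these three categories explicit is a small policy table that the agent consults before every action. The sketch below is an assumption-laden example for a customer-service agent: the action names, the `decide` function, and the 50 euro refund limit are hypothetical and would be replaced by your own rules.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # agent may act on its own
    APPROVAL = "approval"  # a human must approve first
    HANDOFF = "handoff"    # agent must hand over to a human


# Hypothetical policy; every name and limit here is an assumption.
AUTONOMOUS_ACTIONS = {"answer_status_question", "search_knowledge_base"}
APPROVAL_ACTIONS = {"issue_refund", "change_delivery_address"}
REFUND_LIMIT_EUR = 50.0  # refunds above this always go to a human


def decide(action: str, amount_eur: float = 0.0) -> Decision:
    """Map a requested action to allow, approval, or handoff."""
    if action in AUTONOMOUS_ACTIONS:
        return Decision.ALLOW
    if action in APPROVAL_ACTIONS:
        if action == "issue_refund" and amount_eur > REFUND_LIMIT_EUR:
            return Decision.HANDOFF
        return Decision.APPROVAL
    # Anything not explicitly listed is treated as a no-go zone.
    return Decision.HANDOFF
```

With these assumed rules, `decide("issue_refund", 20)` asks for approval, while an unlisted action such as `delete_customer` is handed to a human. Defaulting unknown actions to a handoff keeps the no-go zone closed when new actions are added later.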

4. Make your knowledge agent-ready

The most common failure is not the model. It is the knowledge layer. If agents do not receive clean, approved, and maintainable knowledge, teams get exactly what they want to avoid: uncertain answers, follow-up questions, and processes that sound convincing but are not reliable.

Team shift: Build the knowledge foundation first, then let the agent work on top of it. In practice that means defining sources, structuring content, and treating updates as a process.

5. Decide which data may go in and which must never go in

As agents become more useful, the risk rises if data rules stay vague. Regulation adds a deadline: under the EU AI Act, the AI literacy obligation in Article 4 has applied since February 2, 2025, and most of the Act's remaining provisions are scheduled to apply from August 2, 2026.

Team shift: Use simple data classes such as green, yellow, and red. Define clear rules and document them briefly.
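
A traffic-light scheme like this can be enforced with a small gate in front of the agent. The following is a rough sketch only: what each class means, the keyword list, the email pattern, and the function names `classify` and `prepare_for_agent` are assumptions for illustration, and real classification needs rules that fit your own data.

```python
import re
from typing import Optional

# Assumed meaning of each class; adapt to your own documented rules.
RULES = {
    "green": "may be sent to the agent",
    "yellow": "may be sent after masking personal details",
    "red": "must never be sent",
}

# Deliberately simple, illustrative patterns (not a complete detector).
RED_KEYWORDS = ("password", "salary", "diagnosis")
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def classify(text: str) -> str:
    """Return 'red', 'yellow', or 'green' for a piece of text."""
    lowered = text.lower()
    if any(word in lowered for word in RED_KEYWORDS):
        return "red"
    if EMAIL_PATTERN.search(text):
        return "yellow"
    return "green"


def prepare_for_agent(text: str) -> Optional[str]:
    """Return text that may be passed on, or None if it must be blocked."""
    data_class = classify(text)
    if data_class == "red":
        return None
    if data_class == "yellow":
        return EMAIL_PATTERN.sub("[email removed]", text)
    return text
```

Under these assumptions, a message containing an email address is passed on with the address masked, and a message mentioning a salary is blocked entirely.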

6. Connect agents to systems, but only where it creates impact

Agents become valuable when they move real workflows: CRM, ticketing, knowledge bases, calendars, or communication channels. At the same time, every integration creates a new maintenance and risk surface.

Team shift: Start with one or two integrations that produce immediate value, such as a knowledge base plus a communication channel. Scale only after the setup proves stable.

7. Build in control: monitoring, quality, and logs

Agents without measurement are guesswork. You need visibility into:

- answer quality

- error rates

- handoffs to humans

- frequent questions or intents

- actual time savings

Team shift: No rollout without minimum metrics and logs. Visibility is what makes improvement systematic.
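
Most of the metrics listed above can be derived from a simple interaction log. The sketch below assumes a hypothetical log format (the field names `intent`, `outcome`, and `minutes_saved` and the sample entries are made up for the example). Answer quality is left out because it needs a rating source that this sketch does not assume.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical log entries; field names and values are assumptions.
logs = [
    {"intent": "delivery_status", "outcome": "resolved", "minutes_saved": 4},
    {"intent": "delivery_status", "outcome": "resolved", "minutes_saved": 4},
    {"intent": "refund", "outcome": "handoff", "minutes_saved": 0},
    {"intent": "invoice_copy", "outcome": "error", "minutes_saved": 0},
]


def summarize(entries: List[Dict]) -> Dict:
    """Compute minimum rollout metrics from a list of log entries."""
    total = len(entries)
    outcomes = Counter(entry["outcome"] for entry in entries)
    intents = Counter(entry["intent"] for entry in entries)
    return {
        "conversations": total,
        "error_rate": outcomes["error"] / total if total else 0.0,
        "handoff_rate": outcomes["handoff"] / total if total else 0.0,
        "top_intents": intents.most_common(3),
        "minutes_saved": sum(entry["minutes_saved"] for entry in entries),
    }


report = summarize(logs)
```

For the four sample entries, the error rate and the handoff rate are both 0.25, and the logged time saved is 8 minutes. Tracking the same few numbers every week is what turns individual impressions into a trend.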

8. Standardize prompts and playbooks

If every team member prompts in their own way, results will not scale. Agents need playbooks:

- this is how we qualify leads

- this is how we answer status questions

- this is how we escalate sensitive cases

Team shift: Less creative prompting, more repeatable quality through standards, templates, and defined workflows.

9. Train the team on AI literacy: short, practical, repeatable

AI literacy does not mean everyone needs to become an expert. It means everyone should understand:

- opportunities and limits

- safe usage

- common mistakes

- escalation paths

Team shift: A 60- to 90-minute foundation session plus short refreshers is usually more effective than a large, rarely applied training program.

10. Roll agents out like a product

Agents only become real team leverage when they are reliable. That is why they should not be treated like one-off projects, but like products:

- pilot for two to four weeks

- feedback and refinement

- rollout by team or use case

- continuous improvement

Team shift: An agent is not a one-time launch. It needs ownership, measurement, and iteration.

What this looks like in daily work: three quick examples

Sales

An agent qualifies leads, asks five standard questions, routes hot leads to the team, and can prepare the next step toward an appointment.

Customer service

An agent answers standard questions from the knowledge base, reduces status and delivery-time inquiries, and escalates exceptions cleanly.

Internal knowledge

An agent makes documents searchable, answers questions such as “How does process X work?”, and responds from approved sources instead of assumptions.

Conclusion

If you want to move from GenAI as a tool to AI agents as a working model, you need more than good prompts. You need clearly defined jobs, controlled workflows, clean human handoffs, and a knowledge base your teams can actually trust.

LIVOI supports exactly that approach: agent-based assistance aligned with your processes, tailored to your concrete use case, and built to take over recurring work instead of just generating answers.